Most discussions of AI-powered cryptocurrency trading platforms focus on prediction accuracy, model architecture, and historical returns. These matter, but they leave out a category of risk that has grown faster than any other in the last 18 months: cybersecurity. By the start of 2026, AI trading platforms have become attractive targets for two distinct adversary groups — opportunistic credential thieves looking to drain user accounts, and sophisticated actors interested in either manipulating model outputs or exfiltrating proprietary forecast data. For any user evaluating one of these platforms, the security questions are no longer optional.

The threat model has changed

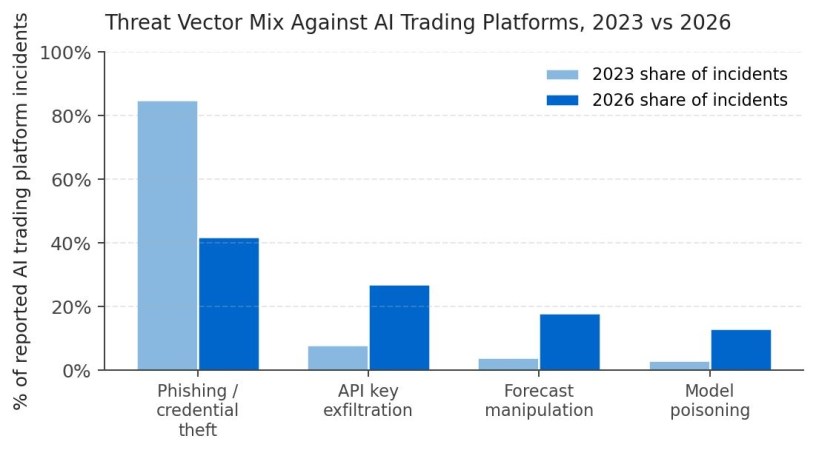

In 2023, the dominant threat against retail crypto users was simple phishing: fake exchange login pages, fake wallet apps, fake support emails. Defending against it was largely a matter of basic hygiene: hardware tokens, careful URL inspection, and the reflex that no legitimate service ever asks for your seed phrase. AI trading platforms in their 2026 form expand the attack surface considerably because they sit between the user and the exchange APIs, holding elevated permissions and real-time forecast data that can move markets at scale.

Three new risk vectors have emerged. First, API key exfiltration: many users connect their exchange API keys to AI platforms for read-only or limited trading access, so a platform compromise that exposes those keys can lead to fund losses across multiple exchanges simultaneously. Second, forecast manipulation: an attacker who can subtly alter the outputs presented to a large user base can profit from the resulting trading patterns. Third, model poisoning, which is less common but was documented in 2025: attackers feed adversarial data into the training pipeline of a public-facing platform to skew its forecasts in their favor.

The audit checklist most users skip

Before granting any AI trading platform access to anything more than a public market data feed, a thorough user should verify several things. The platform should support strong two-factor authentication: a hardware token is preferable, app-based TOTP is acceptable, and SMS is not. It should have a documented incident-response history showing that any prior breaches were disclosed promptly and remediated. The connection between the platform and any connected exchange should use scoped API keys with the most restrictive permissions possible: read-only for analytics, a limited trading scope for any execution feature, and never the full account-management scope.
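The least-privilege rule for API keys can be written down as a small check. The sketch below is illustrative only: the scope names ("read", "trade", "withdraw") and the purpose labels are hypothetical, since each exchange uses its own permission vocabulary, and the function names are invented for this example.

```python
# Hypothetical scope names; real exchanges use their own permission labels.
ALLOWED_SCOPES = {
    "analytics": {"read"},           # read-only is enough for analytics
    "execution": {"read", "trade"},  # limited trading scope, nothing more
}


def excess_scopes(granted, purpose):
    """Return the scopes a key holds beyond what the stated purpose needs."""
    return set(granted) - ALLOWED_SCOPES[purpose]


def is_least_privilege(granted, purpose):
    """True when the key carries no permission beyond the purpose's allowance."""
    return not excess_scopes(granted, purpose)
```

A key created for an execution feature that also carries a withdrawal permission would be flagged by `excess_scopes`, which is the signal to revoke it and issue a narrower one.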

Beyond the basics, there are a few more sophisticated checks worth running. The platform should publish a security policy that names a real cybersecurity contact and committed disclosure timelines for vulnerabilities. Third-party security audits should be available, ideally from a recognized firm rather than a self-published whitepaper. And the platform’s prediction history should be auditable from outside — if you cannot independently verify what the platform claimed in the past, you also cannot detect quietly altered or back-dated predictions, which is itself a form of integrity attack.
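One common place to look for a named security contact is a `security.txt` file, the format standardized in RFC 9116, which requires a `Contact` and an `Expires` field. Whether a given platform publishes one is an assumption; many do not. The sketch below only parses text that has already been downloaded, and its function names are invented for illustration.

```python
REQUIRED_FIELDS = ("contact", "expires")  # required by RFC 9116


def parse_security_txt(text):
    """Collect 'Name: value' lines into a dict of lowercase name -> values."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not a field line.
        if not line or line.startswith("#") or ":" not in line:
            continue
        name, value = line.split(":", 1)
        fields.setdefault(name.strip().lower(), []).append(value.strip())
    return fields


def missing_required(fields):
    """List the required fields that the parsed file does not contain."""
    return [name for name in REQUIRED_FIELDS if name not in fields]
```

A file with no `Contact` line, or none at all, does not prove a platform is insecure, but it does mean the disclosure channel has to be verified some other way.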

Why transparency is a security feature

One often-overlooked dimension of security in AI trading platforms is the transparency of the prediction record itself. Platforms that publish their entire forecast history alongside actual outcomes are not just being honest about accuracy — they are creating an external integrity check that prevents quiet tampering. If yesterday’s prediction is publicly archived, an attacker who later wants to alter it has to also alter the archived copy, which raises the cost and detectability of the manipulation considerably.
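One way to make an archive tamper-evident is a hash chain, in which each entry's hash covers the previous entry's hash. The following is a minimal sketch of that general technique, not a description of how any particular platform stores its forecasts; the record fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash, record):
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def build_chain(records):
    """Link records so that each hash depends on every earlier record."""
    chain, prev = [], GENESIS
    for record in records:
        prev = entry_hash(prev, record)
        chain.append({"record": record, "hash": prev})
    return chain


def first_tampered_index(chain):
    """Return the index of the first entry whose hash fails, or None."""
    prev = GENESIS
    for i, entry in enumerate(chain):
        if entry_hash(prev, entry["record"]) != entry["hash"]:
            return i
        prev = entry["hash"]
    return None


def head_hash(chain):
    """The latest hash; this is the value to anchor somewhere external."""
    return chain[-1]["hash"] if chain else GENESIS
```

The chain alone is not enough: an attacker who controls the archive can alter a record and recompute every later hash. Detection depends on someone outside the platform having kept an earlier copy of the head hash, which is why public archiving matters.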

This is one reason why platforms like Becoin.net, which publish a verifiable, time-stamped record of forecasts across different market regimes, have a structural security advantage, quite apart from the value of that transparency for evaluation purposes. The publicly anchored history acts like a tamper-evident seal on the platform’s claimed performance, useful both for the user evaluating the platform and for the platform defending itself against after-the-fact disputes over what it actually predicted.

Practical hardening steps for users in 2026

Three changes can dramatically reduce most users’ exposure when working with AI crypto trading tools. First, isolate any AI platform integration to a dedicated exchange sub-account or trading-only API key, never the master account. Second, run the platform’s web interface through a profile or browser dedicated to financial work, with no extensions and no other tabs sharing the session. Third, monitor for unexpected positions, balance changes, or pattern shifts on a daily basis rather than weekly — the window between compromise and damage is short, and most active losses happen within 48 hours of credential exposure.
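The daily monitoring step can be partly automated by comparing two balance snapshots and subtracting the changes you can account for. This is a sketch under stated assumptions: the snapshots are plain dictionaries you have already obtained from your exchange (no exchange API is called here), and the function name and parameters are hypothetical.

```python
from decimal import Decimal

ZERO = Decimal("0")


def unexplained_deltas(previous, current, expected=None, tolerance=ZERO):
    """Return asset -> balance change that known activity does not explain.

    previous, current: dicts mapping asset symbol to Decimal balance.
    expected: dict mapping asset symbol to the net change you initiated.
    tolerance: largest unexplained change to ignore (for fees or rounding).
    """
    expected = expected or {}
    alerts = {}
    for asset in sorted(set(previous) | set(current)):
        delta = current.get(asset, ZERO) - previous.get(asset, ZERO)
        gap = delta - expected.get(asset, ZERO)
        if abs(gap) > tolerance:
            alerts[asset] = gap
    return alerts
```

An empty result means every change matched activity you initiated; anything else is worth investigating the same day, starting with revoking the API key.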

Lastly, before any meaningful financial commitment, search the platform’s name combined with terms like “breach”, “incident”, “disclosure” and “vulnerability” on technical forums, GitHub security advisories, and dedicated cybersecurity tracking services. A platform that has been operating for two or more years without any documented security incident is unusual; a platform that has had incidents but disclosed and remediated them transparently is generally a better long-term bet than one with a suspiciously clean public record.

Conclusion

AI trading platforms have moved from experimental tools to part of the everyday infrastructure for a meaningful slice of crypto users. That infrastructure now sits at the intersection of two of the most attractive targets for cyber adversaries: financial accounts and AI model integrity. The user-side security work — scoped API keys, hardware-token authentication, transparency verification, and ongoing monitoring — is no longer optional. It is the difference between using these platforms responsibly and discovering, in the worst possible way, that someone else used them on your behalf.